AI assistants are changing how people discover things

Search is changing. More and more people now put their questions directly to AI assistants such as ChatGPT, Gemini, Perplexity, or Google AI Mode instead of browsing dozens of websites. Rather than scanning a page of search results, they often receive a single synthesized answer that summarizes information from across the web. The assistant reads content from multiple pages, extracts relevant details, and combines them into a response that feels immediate and complete.

This shift is creating a new discovery layer. In this environment, visibility no longer depends only on ranking pages in traditional search engines. It also depends on whether AI assistants include your brand when generating answers. This new landscape is variously called AI Search, AI Visibility, or Generative Engine Optimization (GEO). Understanding how these systems build responses is becoming increasingly important.

AI answers are built from sources

AI responses do not appear out of nowhere. Behind an answer there are usually web pages that the system has used as references. These pages can include social media posts, blog posts, comparison articles, product documentation, tutorials, industry guides, and many other forms of content. Together they form the informational foundation that the AI assistant uses to build its response.

Sometimes these pages appear explicitly as citations or references that users can open. In other cases they are simply used to generate the answer without being shown directly. Either way, the principle remains the same: the content that AI systems rely on strongly influences the answers they produce. If the sources used by an AI assistant mention your brand or cite your website, the likelihood that your brand appears in the final response increases significantly. If those sources only mention competitors, the generated answer will likely reflect that imbalance.

Note: LLMs can drive clicks, traffic, and even app downloads (something you can observe in tools like Google Analytics or App Store Connect), but they are not designed to optimize for that. In practice, the real impact of AI visibility often appears later through brand searches on Google, the App Store, TikTok, or other discovery platforms. As a result, attribution becomes even more complex.

Visibility in AI starts with visibility in sources

This dynamic introduces an important difference between traditional SEO and AI Search. In classic search, the primary goal is to rank your own page as high as possible. In AI-driven discovery, another layer appears because your brand also needs to exist inside the content that AI assistants rely on to construct their answers.

Consider prompts such as “best project management tools,” “top AI visibility platforms,” or “best marketing analytics tools.” When answering these types of questions, AI systems often rely on existing content such as comparison articles, software roundups, expert recommendations, and detailed industry guides. If your brand appears inside those pages, the chances of appearing in the AI-generated response increase. If it does not appear in those sources, the AI assistant may simply never encounter your brand when building its answer.

For this reason, understanding which pages AI systems use as sources becomes essential for brands that want to improve their visibility in AI-driven search.

And, as has always been the case, it ultimately comes down to building a brand.

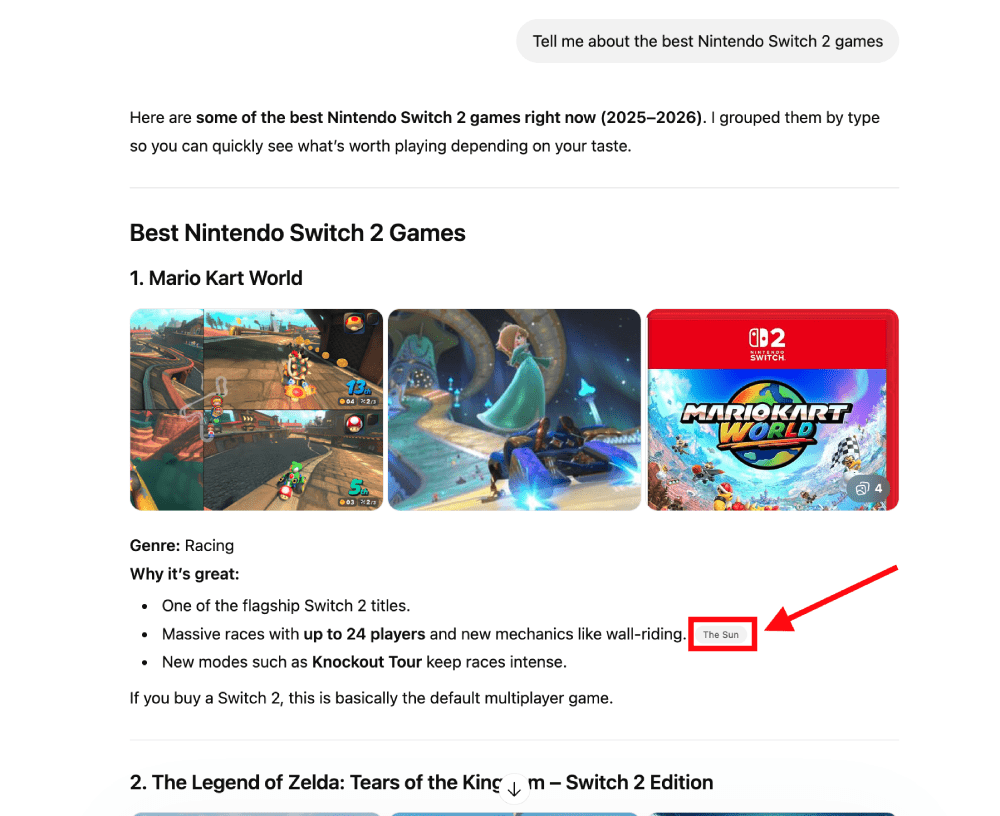

Citations can also generate traffic

Another important aspect of sources is that many AI assistants now display them directly in their answers. On some platforms they appear as references, while on others they are shown as clickable citations that allow users to explore the underlying content.

When your website appears among those sources, several benefits emerge simultaneously. Your content becomes part of the explanation that the AI assistant provides, which reinforces authority and credibility. Users also see your domain while reading the response, which increases brand visibility even if they do not click immediately. In many cases, users can open those citations to learn more, which means that sources can also generate direct traffic from AI answers to your website.

This dynamic means that companies are no longer competing only for search rankings. They are also competing to become trusted sources used by AI systems when answering questions.

You can check this manually (but it doesn’t scale)

If you want to explore how your brand appears in AI answers, you can start by testing prompts directly on different LLMs. For example, you might open ChatGPT or Gemini and ask questions such as “What are the best project management tools?” or “Which SEO platforms should I use?” After reading the response, you can check whether your brand appears in the answer or whether certain websites are referenced.

You can repeat the same experiment on platforms such as Perplexity or by triggering Google AI Overviews with relevant search queries. Testing multiple prompts and industries can help you understand which brands and sources appear most frequently. This approach is useful for gaining an initial understanding of how AI assistants construct answers.

However, manual exploration quickly becomes difficult to scale. As the number of prompts grows and responses vary across models, languages, and locations, tracking everything consistently becomes extremely time-consuming.
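For a handful of answers copied by hand, the brand check itself can be scripted. The following is a minimal sketch, not a tracking tool: the assistant labels, the brand "Acme Boards", and the saved answer texts are all made-up placeholders, and the function only tests whether the brand name occurs as a whole word or phrase.

```python
import re

def mentions_brand(answer: str, brand: str) -> bool:
    """Return True if `brand` occurs in `answer` as a whole word or phrase, ignoring case."""
    # The lookarounds stop "Acme" from matching inside a longer word such as "Acmeware".
    pattern = r"(?<!\w)" + re.escape(brand) + r"(?!\w)"
    return re.search(pattern, answer, flags=re.IGNORECASE) is not None

def brand_presence(answers: dict[str, str], brand: str) -> dict[str, bool]:
    """Map each saved answer (keyed by a label you choose) to whether it mentions the brand."""
    return {label: mentions_brand(text, brand) for label, text in answers.items()}

# Hypothetical answers pasted in manually; the labels and texts are placeholders.
saved_answers = {
    "assistant_a": "Popular options include Acme Boards, ExampleTool, and OtherTool.",
    "assistant_b": "Many teams choose ExampleTool or OtherTool for project tracking.",
}

presence = brand_presence(saved_answers, "Acme Boards")
```

This works for a few prompts, but every answer still has to be collected by hand, which is exactly the part that does not scale.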

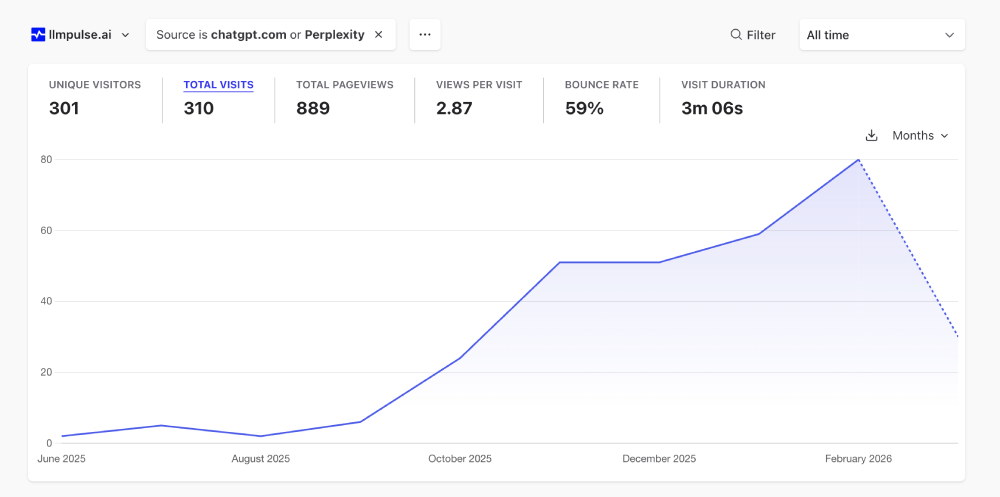

Automated tracking with LLM Pulse

Because manual tracking does not scale well, many teams use specialized platforms to monitor how brands and websites appear in AI responses. Tools such as LLM Pulse automate the process by running prompts across multiple AI models (ChatGPT, Gemini, Google AI Mode, Google AI Overviews, and Perplexity) and collecting the answers systematically.

The process typically starts with creating a project for your brand, including your domain name and industry context. From there, you define prompts that reflect how users might search for tools, services, or companies in your market. The platform runs those prompts regularly across different LLMs and gathers the generated responses automatically.

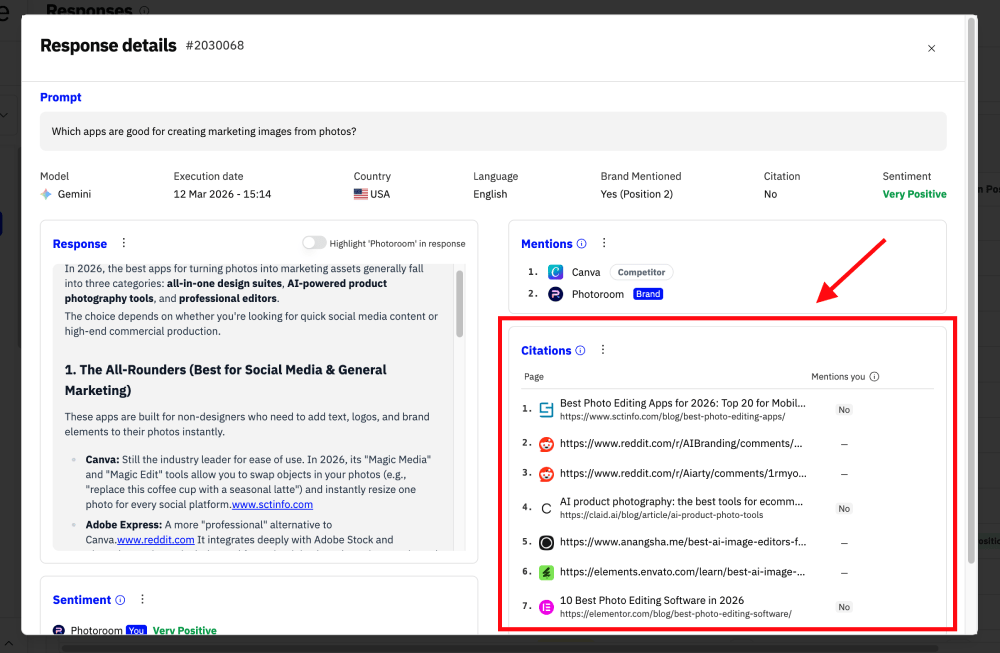

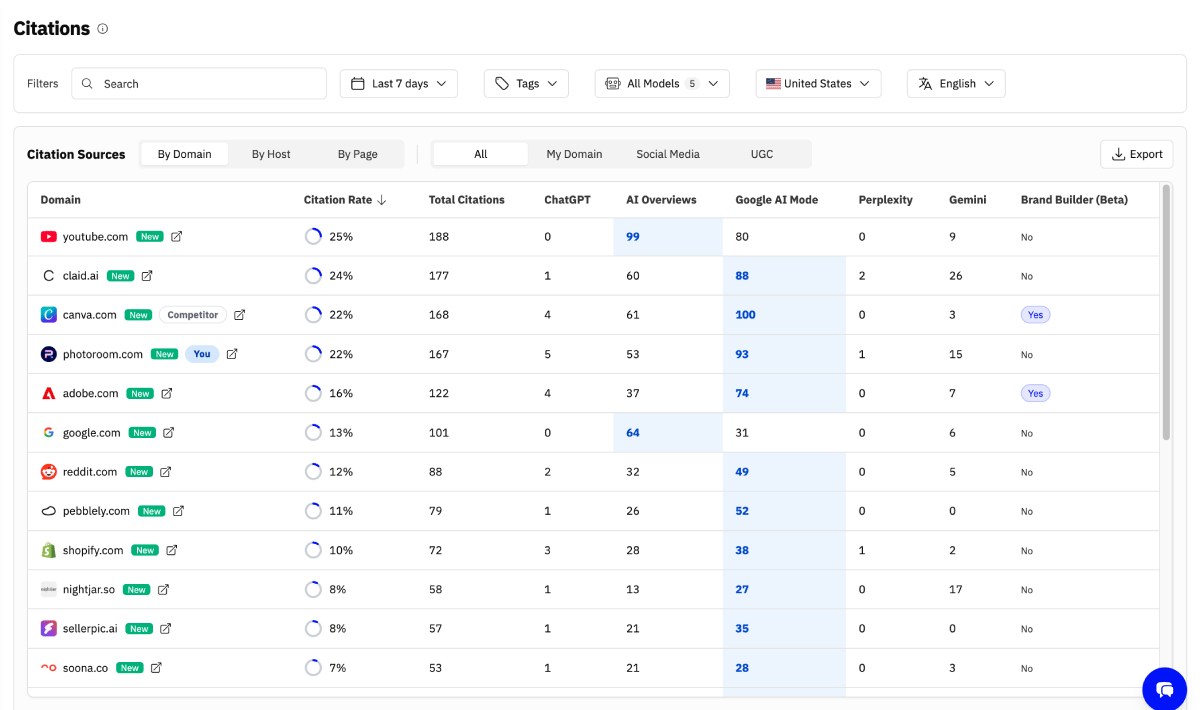

Each response can then be analyzed to identify which websites are used as sources. Instead of reviewing hundreds of answers manually, teams can immediately see which domains AI assistants cite most often, which sources dominate specific prompts, and whether their own site appears among them.
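To make the idea of source analysis concrete, here is a small sketch of how cited domains could be counted across collected responses. It is an illustration only and does not reflect how LLM Pulse works internally: the record layout (`prompt`, `model`, `sources`) and the sample URLs are assumptions made up for this example.

```python
from collections import Counter
from urllib.parse import urlparse

def domain_of(url: str) -> str:
    """Return the lowercase host of a URL without a leading "www.", or "" if none is found."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host

def count_cited_domains(responses: list[dict]) -> Counter:
    """Count, for each domain, the number of responses that cite it at least once."""
    counts = Counter()
    for response in responses:
        # A set ensures a domain cited several times in one answer is counted once.
        domains = {domain_of(url) for url in response["sources"]}
        domains.discard("")  # drop entries that were not parseable URLs
        counts.update(domains)
    return counts

# Hypothetical collected responses; the field names and URLs are placeholders.
sample_responses = [
    {"prompt": "best project management tools", "model": "model_a",
     "sources": ["https://www.example.com/roundup", "https://reviews.example.org/top-10"]},
    {"prompt": "best project management tools", "model": "model_b",
     "sources": ["https://example.com/guide", "https://example.com/pricing"]},
]

domain_counts = count_cited_domains(sample_responses)
most_cited = domain_counts.most_common(3)  # domains ordered by number of citing responses
```

Counting each domain once per response keeps a single answer with many links to the same site from inflating that site's total.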

From individual responses to visibility metrics

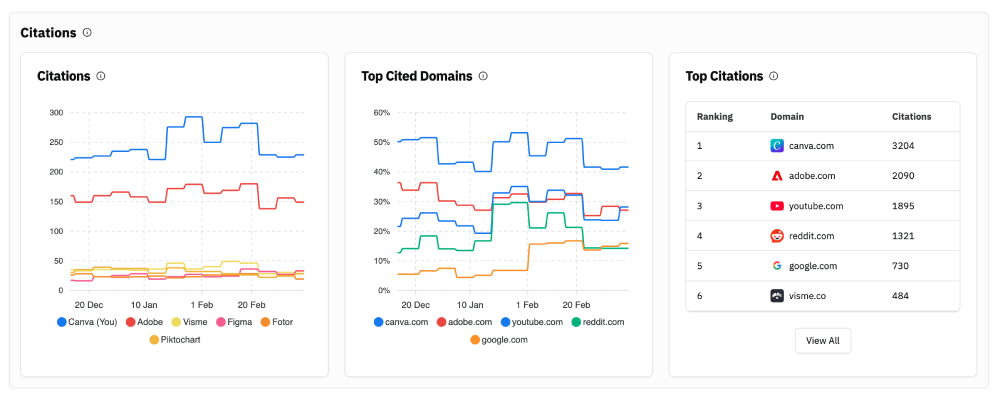

When enough responses are collected, citation data can be aggregated into meaningful visibility metrics. These insights help teams understand how often their website appears as a source, which competitors dominate certain prompts, and how their presence evolves over time.

The data also allows companies to identify which prompts generate visibility and which types of content tend to appear most frequently in AI answers. By examining individual responses in detail, teams can see exactly which sources were used and how brands are positioned within those answers. This helps move beyond isolated examples and understand how LLMs actually represent an entire market.
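One simple way to picture such a metric is a citation rate: the share of collected responses in which a domain appears as a source, grouped by prompt or by time period. The sketch below assumes a hypothetical record layout (`prompt`, `week`, `sources`) and made-up sample data; real platforms may define their metrics differently.

```python
from collections import defaultdict
from urllib.parse import urlparse

def cites(response: dict, domain: str) -> bool:
    """Return True if any source URL belongs to `domain` or one of its subdomains."""
    for url in response["sources"]:
        host = urlparse(url).hostname or ""
        if host == domain or host.endswith("." + domain):
            return True
    return False

def citation_rate(responses: list[dict], domain: str, key: str = "prompt") -> dict[str, float]:
    """Share of responses citing `domain`, grouped by the given field (e.g. "prompt" or "week")."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for response in responses:
        group = response[key]
        totals[group] += 1
        if cites(response, domain):
            hits[group] += 1
    return {group: hits[group] / totals[group] for group in totals}

# Hypothetical collected responses; the field names, weeks, and URLs are placeholders.
sample = [
    {"prompt": "best project management tools", "week": "2025-W01",
     "sources": ["https://example.com/guide"]},
    {"prompt": "best project management tools", "week": "2025-W02",
     "sources": ["https://reviews.example.org/top-10"]},
    {"prompt": "top marketing analytics tools", "week": "2025-W02",
     "sources": ["https://blog.example.com/analytics", "https://reviews.example.org/list"]},
]

rate_by_prompt = citation_rate(sample, "example.com", key="prompt")
rate_by_week = citation_rate(sample, "example.com", key="week")
```

Grouping by prompt shows where a site is or is not being used as a source, while grouping by week gives a rough view of how that presence changes over time.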

Why monitoring sources matters

AI assistants are becoming a major interface for discovering information, products, and services. If the sources used by those systems consistently mention your competitors while ignoring your brand, their perspective will shape the answers that users see.

Monitoring citations helps companies understand whether their content participates in the information layer that AI assistants rely on. It reveals which websites influence AI responses, which competitors appear most often, and where new visibility opportunities exist. Instead of relying on occasional manual checks, automated monitoring provides a structured and scalable way to understand how AI assistants represent your brand and your industry.

FAQ

Why should brands track citations in AI assistants?

Citations reveal which websites AI systems rely on when generating answers. If your domain appears among those sources, it increases the likelihood that your brand will also appear in AI responses and improves your overall visibility in AI-driven discovery.

Do AI assistants really rely on web sources?

Yes. Most AI assistants construct answers using information extracted from web pages such as articles, documentation, guides, and product pages. These sources influence the content of the response.

Why do sources influence brand mentions?

If the web pages used by an AI system mention your brand, that information becomes part of the context used to generate the answer. As a result, the probability that your brand appears in the final response increases.

Can citations in AI answers be tracked automatically?

Yes. Platforms like LLM Pulse analyze AI-generated responses across multiple models and identify which websites appear as sources, how frequently they are cited, and how brand visibility evolves over time.

How does citation tracking relate to GEO or AI Search?

Monitoring sources helps companies understand whether their content is part of the information layer AI assistants rely on to generate answers. This insight can inform content strategies and strengthen visibility in AI search.